Leonardo Foletto offers custom software solutions for audio and multimedia applications, audio post production services and creative programming consulting.

His areas of work include the development of digital signal processing algorithms, live coding, applied machine learning solutions for music and sound processing, algorithmic and generative composition, computer-musician interaction, and multimodal approaches to music composition and performance.

Most of my life has been divided between my two greatest passions: arts and technology. I began engaging with music as a child, playing the saxophone at age 7 and moving to drums at 12. For me, music has always been the best way to convey emotions. In my own work, my aim is to recreate the same feeling of awe I experience when listening to a great piece of music or witnessing an exceptional piece of art. At the same time, I’ve always loved the sciences and strive to find new ways to use my passion for technology to create art. I found the perfect synthesis of these two key aspects of my life in Berklee College of Music’s Electronic Production & Design major, and since then I've been exploring the many facets of the audio technology world. My work ranges from audiovisuals, generative and algorithmic composition, and machine learning based audio software to hardware hacking, custom software for sound processing and music synthesis, live coding, audio post production, mixing and mastering. Commercial products I have worked on in various roles include Avid Pro Tools, the Voicemod ecosystem of apps and Sound Drive.

Products

Commercial products I’ve worked on

SOUND DRIVE

Sound Drive is a project born from a partnership between will.i.am and Mercedes-AMG. It consists of an application that runs on the car's head unit like any other audio player, but the tracks you listen to are remixed in real time based on the signals coming from the car.

In this project, I have served as QA Lead of will.i.am's engineering team. In this role, I contributed to the development of an innovative interactive audio engine integrated into Mercedes' series of electric vehicles. My responsibilities included verifying the quality and performance of the software and its integration in the vehicles, and assisting with prototype implementations of machine learning-driven automation features. I have also been involved in the research and development of a music source separation library, contributing models and ensembles to achieve incremental quality improvements in our music source separation system.

VOICEMOD

Voicemod is a real-time AI voice conversion platform with an ecosystem of apps and features tailored to streamers, gamers, RPG players and the like.

At Voicemod I worked as a QA automation engineer, developing Python libraries and frameworks to automate audio quality testing of Voicemod's audio engine and its end-to-end integration into Voicemod's line of desktop products.

AVID PRO TOOLS

Avid Pro Tools is an industry-leading DAW for music and audio production, complemented by a series of control surfaces, audio interfaces and other pro-audio hardware.

At Avid I worked as a QA Engineer, testing new features and releases and designing test cases. Part of my responsibilities also included managing and supporting the community of beta testers.

Together with a couple of colleagues, I also helped spearhead audio AI research and development at Avid, carrying out preliminary research (audio event detection and instrument classification) and mentoring audio machine learning research interns.

Software

A selection of personal software projects.

Soir

A groovebox for the New Nintendo 3DS (work in progress). A demo of the project was presented at the 2025 Audio Developer Conference. The source can be found on my GitHub.

Kairos

Live coding library written in Haskell, using the Csound UDP server as the audio engine. It is an ongoing open-source project that started as my thesis for the Bachelor's degree in Electronic Production and Design at Berklee College of Music. For more details, visit the GitHub page and watch the short video presentation showcasing the state of the project at the end of my senior year at Berklee College of Music.

I had the honor of presenting a paper about Kairos at the 5th International Csound Conference. You can read it here and check out the official Proceedings publication here.

You can see some of my performances at the links provided in the Performances & Talks section of my website.

A video I made for Umanesimo Artificiale's Live Code Your Track Live video series, where I give some insights into my creative process when performing with Kairos.

The Sound of AI - Open Source Research

A sampler powered by machine learning. It takes a speech input and generates a guitar sample based on the requested tonal characteristics, using a TensorFlow implementation of Spectral Modeling Synthesis. It's then possible to explore the latent space of the generated sample to create new sounds, or simply perform with the instrument using the on-screen virtual keyboard or an external MIDI keyboard. The application is written in Python, with the sound engine for the sampler written in Csound. In this project I served as the co-coordinator of the Production Group, responsible for creating the sampler engine, the state machine and UI for the app, and the pipeline to integrate the machine learning models built by the other research groups into the front end. I also took charge of the evaluation of the project, contacting and interviewing creative technology professionals and collecting their feedback into qualitative and quantitative reports. The project website is available here. The main repo of the project is available on GitHub.

CSOUND OPCODES

A repository of opcodes for Csound developed in C++.

So far it includes:

Waveloss : a Csound implementation of the Waveloss UGen from SuperCollider

Pan3 : stereo panning algorithms including constant power sin, constant power sqrt and mid/side panning

Wavefolder : a sine / triangle wavefolder with feedforward and feedback

PdOsc : a phase distortion / phase shaping lookup table precision oscillator

Unicomb : a comb filter with independent feedback and feedforward paths

Available on my GitHub page.
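As an illustration of the constant power pan laws mentioned for Pan3, here is a minimal Python sketch. It is not the code from the repository: the function names and the convention that the pan position runs from 0 (hard left) to 1 (hard right) are assumptions made for this example.

```python
import math


def constant_power_sin(pan):
    """Sine/cosine pan law; pan is assumed to be in [0, 1] (0 = left, 1 = right)."""
    theta = pan * math.pi / 2.0
    return math.cos(theta), math.sin(theta)


def constant_power_sqrt(pan):
    """Square-root pan law; same assumed pan range as above."""
    return math.sqrt(1.0 - pan), math.sqrt(pan)


def pan_mono(sample, pan, law=constant_power_sin):
    """Place a mono sample in the stereo field using the given pan law."""
    gain_left, gain_right = law(pan)
    return sample * gain_left, sample * gain_right


if __name__ == "__main__":
    # Print the left/right gains of both laws at a few pan positions.
    for position in (0.0, 0.25, 0.5, 0.75, 1.0):
        print(position, constant_power_sin(position), constant_power_sqrt(position))
```

With both laws, the squared left and right gains sum to 1 at every pan position, so the total power stays constant as a sound moves across the stereo field.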

Audio Analysis Experiments

A collection of short Jupyter Notebooks and Python files exploring digital signal processing, audio analysis, music information retrieval and machine learning for audio in Python. All the source code is available on GitHub, and some additional interactive demos and models are available on my Hugging Face profile.

lf.gen

An array of quick DSP algorithms packaged as gen~ patchers for quick prototyping in Max/MSP. The collection includes filters, panners and signal generators. Available on my GitHub page.

Audio Programming for iOS class projects

A number of simple iOS apps developed as class projects. The collection includes an FM synthesizer, a granular delay, and a phase vocoder and vocal freezer, together with other audio effects. A selection of them is available on my GitHub.

Multimedia

Selected works in the field of multimedia art.

DISTANTIA AV

DISTANTIA is a multimedia audiovisual project about the mediated nature of observation, blending satellite imagery, virtual spaces and deep immersive soundscapes enriched by AI-generated sounds. These elements are woven together, creating a collection of landscapes viewed from the distant perspective of the SENTINEL-2 satellite, then processed, analyzed and returned in various types of visualizations, from vast virtual scenarios to disrupted and fragmented point clouds, inviting viewers into a complex scenario where perception is blurred by the overlap between reality and representation. For this project I worked as an applied music machine learning engineer and consultant, providing the notebooks necessary to train RAVE models for neural real-time audio synthesis and giving guidance on their use in the studio and for Ableton Live performances using nn~.

MONAD & SENSE

Multimedia fashion design project in collaboration with Kakan Kudo. I performed live-coded music, which I also mixed and mastered.

CHIAROSCURO

Performance in three acts by Joy Lee that explores the duality of emotions, from dark to light. My roles included performing on modular synthesizers and processing the audio from the other players in real time through custom-made Max/MSP and Csound software.

The video showcases the first iteration of the project, presented on a 10.2-channel sound system with three video screens for projections.

Video of the second iteration of the performance, featuring Neil Leonard on saxophone, Aaron Myles Pererira and Kedaar Kumar on visuals, and the dancer Madeline Miller.

DIGITAL FOREST

Multimedia performance produced in collaboration with Nona Hendryx and Will Calhoun. My role in the project was to lead and coordinate a team of students. Our objectives were to create a web interface through which the audience could perform and affect the music during the performance, generate the sound effects controlled by this web interface, set up the network through which all the performance data was piped and distributed, and regulate how all the teams used this network to share data with each other based on each team’s needs.

A ROSE OUT OF CONCRETE

Performance produced by Hank Shocklee and Nona Hendryx. This multimedia performance piece featured 3 projection-mapped screens, 15 dancers and 10 music technologists. It was conceived as an experimental manipulation of the body to produce and control sound, lights and visuals through sensors applied to the dancers, who were choreographed by Duane Lee Holland Jr. I was part of the tech team led by Dr. Richard Boulanger, in charge of developing the devices that reacted to the dancers' gestures to manipulate and transform sounds, visuals and lights. Some of the software I developed specifically for this project can be found in the Software section.

SAMSARA

Collaborated on a three-movement multimedia performance realized by A2daC as his final capstone project for the Electronic Production and Design major at Berklee College of Music. My main role in the project was to develop a series of custom Live devices to be used by the artist in the performance. The principal device I created is a Max for Live looper that lets the performer record and manipulate music on the fly, shaping the composition in real time.

visuals: Jacob Johnson | dancer: Erica Codd | videographer: Fen Rotstein

VUU

Prototype of an audio visualizer application for augmented reality, realized during a class in collaboration with students from MIT, Harvard, Boston Conservatory and Berklee. The goal was to prototype an audio visualizer for augmented reality to be used at music festivals and concerts, creating a personalized experience of the event for every attendee.

LIVE VISUAL ART FOR MUSIC EVENTS

I have been working with live visuals, primarily using Jitter from Cycling '74, in a wide range of settings and musical styles: from DJs to live bands to jazz trios.

Post Production

Selected post production projects. My experience includes mastering, mixing, sound design for linear and interactive media, and sound and music editing.

IDN - Kaleidoscope Album Mastering

Mastered IDN's album "Kaleidoscope". You can read a review here.

Premio

Mixed and mastered multiple tracks by Premio.

PESCHE DRUM COVER

Mixed and mastered Gianluca Pellerito's drum cover of "Pesche". Done in Pro Tools.

"Knights of the Old Republic 2" menu sounds replacement

All sounds synthesised with my Eurorack modular synth, recorded and processed in Ableton Live. Audio post production done in Pro Tools.

"ERASERHEAD" SOUND REPLACEMENT

Sound replacement of the final scene from David Lynch's "Eraserhead", made for a class project using Ableton Live 10. The original sound design is very sparse, with a lot of silence. In my version I tried to play with silence and harsh sounds to convey the feeling of the scene.

"PI" SOUND REPLACEMENT

Sound replacement of a scene from Darren Aronofsky's "Pi", made for a class project using Avid Pro Tools and an Avid S6 control surface. In the sound design of this scene, I tried to render the sonic landscape as heard through the ears of the protagonist, representing the headache afflicting the character.

DROIDX COMMERCIAL SOUND REPLACEMENT

Sound replacement of the DroidX commercial. This was a class project, made with Apple Logic Pro X.

"HOPE" MIX

A mix of the song "Hope is a drug" by Gavin Castleton. This was a class project, mixed in Avid Pro Tools on an Avid S6 control surface.

POLYMIA MIX & MASTER

Mix and master for streaming for multiple releases by Polymia.

MASTERING PROJECTS

A few mastering projects from the Mastering course I attended at Berklee. Mastered in Magix Sequoia, with most of the processing done with iZotope Ozone, Weiss DS1 and Weiss EQ1.

Hardware

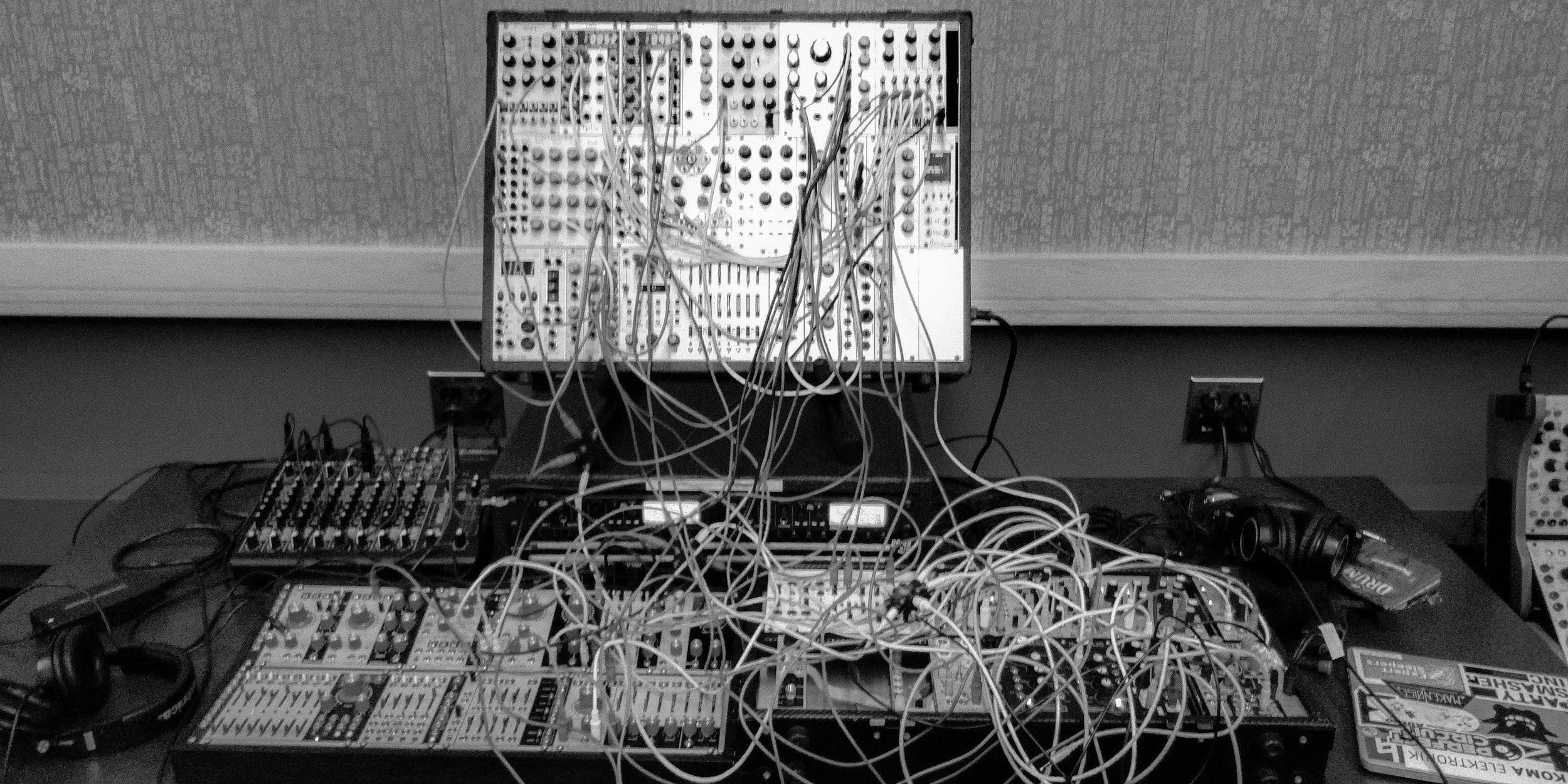

MODULAR SYNTHESIZERS

In the last few years I got involved in the Eurorack modular synthesizer community and quickly got into DIY: building cases and synthesizer modules, and circuit bending old toys.

The first Eurorack case I built.

Performing at Berklee's Modular on the Spot with Stefano Genova.

Circuit bent Speak & Math. Started as a class project.

Noise box synth built by circuit bending a 555-based soldering kit.

This was a class project for EP-391 Circuit Bending & Physical Computing.

Performing Joy Lee’s composition “The Fog” for the Society of Composers Spring 2019 Concert at Old South Church in Boston.

Performances & Talks

A list of selected performances, workshops and talks I’ve given, with related links.

2026:

- 21/03/2026 Equinox - Toplap Italia - Live Coded Performance (Pisa, IT)

2025:

- 11/11/2025 Audio Developer Conference - Live Coded Performance (Bristol, UK)

- 11/11/2025 Hacking Handhelds for creative audio - Building Music Apps for the Nintendo 3DS - Talk presented at the Audio Developer Conference (Bristol, UK and online)

- 06/09/2025 Mirabilia Festival - Live Coded Performance (Cuneo, IT)

2024:

- 27/01/2024 Algorave a Spazio X - Live Coded Performance (Treviso, IT)

2023:

- 25/04/2023 Electro Music Expo - Live Coded Performance (Marghera, IT)

- 26/02/2023 Baitattack! - Live Coded Performance (Trento, IT)

- 28/01/2023 FabLab 10 - Live Coded Performance (Genova, IT)

2022:

- 03/12/2022 Algorave a Spazio X - Live Coded Performance (Treviso, IT)

- 04/06/2022 FLASHCRASH - Live Coded Performance (online)

- 14/05/2022 Toplap Berlin Algo10 - Live Coded Performance (Berlin, DE and online)

- 04/05/2022 PiGroZia by Tempo Reale - Live Coded Performance (online)

- 26/03/2022 VIU Festival - Live Coded Performance (Barcelona, ESP)

- 25/03/2022 Csound: Sound Synthesis and Language Extensions - Workshop presented at VIU Festival (Barcelona, ESP)

- 20/03/2022 Algorave 10th Birthday - Live Coded Performance (online)

2021:

- 21/12/2021 Tidal Club Longest Night - Live Coded Performance (online)

- 14/12/2021 Code3 : Toplap Italia x mcQuadro - Talk and Live Coded Performance (online)

- 08/10/2021 Toplap Italia x Codefest 2021 - Live Coded Performance (online)

- September Monad & Sense - Live Coded Performance for multimedia fashion design project

- 18/06/2021 - 19/06/2021 Live Coding e Musica Algoritmica - Two-day workshop on live coding with Sonic Pi, taught together with Nesso and Guiot. Presented at RoBOt Festival for Umanesimo Artificiale (Bologna, IT)

- 18/06/2021 RoBOt Festival - Live Coding Performance for Umanesimo Artificiale (Bologna, IT)

- 03/04/2021 Toplap Italia April's Fools Live Stream - Live Coded Performance with the Toplap Italia community (online)

- 20/02/2021 Transnodal livecode stream - Live Coded Performance joining the Toplap Italia performance block for Toplap's Birthday (online)

2020:

- 09/05/2020 Algorave VR >UXR.zone Cyber Yacht - Live Coded Performance on the UXR.Zone Cyber Yacht organized by Toplap Berlin and Toplap MX

- 04/05/2020 Algo:Ritmi at the Circle VR Club - Live Coded Performance (online)

- 04/04/2020 KAIROS: performing music with Csound and Haskell - Workshop given during the Livecoding In Space event organized by SpacyCloud Lounge DC (online)

- 22/03/2020 EulerRoom Equinox 2020 - Live Coded Performance joining the Livecode.nyc performance block (online)

- 31/01/2020 Hannah Allen - playback engineer and live vocal processing, Caffeine Underground (BK, NY)

2019:

- 19/11/2019 Sounds Delicious: A Food Fantasia by Joy Lee - Multimedia Performance: live coding, live sampling and real-time sound processing of food preparation and eating

- 05/10/2019 FALLGORAVE - Live Coded Performance at the fall algorave organized by Livecode.nyc at Wonderville (BK, NY)

- 29/09/2019 Kairos - a Haskell Library for Live Coding Csound Performances - Prepared talk for the 5th International Csound Conference (Cagli, IT)

- 13/09/2019 Modular on the Spot - Eurorack Performance, event organized by Signal Flux at Nowadays (BK, NY)

- 06/08/2019 CHIAROSCURO by Joy Lee - Multimedia Performance: Played modular synth, live sound processing and live coding (BOS, MA)

- 02/05/2019 Modular on the Spot - Eurorack Performance for the second Berklee Modular on the Spot (BOS, MA)

- 29/04/2019 The Fog by Joy Lee - Performed modular synthesisers for the Society of Composers 2019 Spring Concert (BOS, MA)

- 20/04/2019 Digital Forest by Nona Hendryx and Will Calhoun - Multimedia Performance: played Eurorack and custom DSP effects and generators manipulated by the audience through OSC (BOS, MA)

2018:

- 08/08/2018 Samsara by A2DaC - Multimedia Performance: developed custom Max for Live devices and assisted as show tech (BOS, MA)

- 03/05/2018 Modular on the Spot - Eurorack Performance for the first Berklee Modular on the Spot (BOS, MA)

- 26/04/2018 Matt & Kim, AJNA - Performed live audio-reactive visuals for AJNA's show at House of Blues (BOS, MA)

- 19-21/04/2018 A Rose Out Of Concrete by Nona Hendryx and Hank Shocklee - Multimedia Performance: live digital signal processing of dancers' movements and custom synth performance (BOS, MA)